Commercial real estate portfolios run on data. Energy consumption, equipment performance, maintenance schedules, occupancy patterns, ESG compliance — every building generates thousands of data points every hour. For most operations teams, that data flows into spreadsheets. Hundreds of them. Maintained manually. Reviewed weekly, if at all.

The tools most CRE operators use were never designed to act on data. They were designed to store and display it. Moving from reactive spreadsheet monitoring to genuinely autonomous operations requires a fundamentally different architecture — one built around AI agents rather than dashboards.

Below is what that shift looks like in practice, why it is happening now, and how commercial portfolios can move through the transition without disrupting existing infrastructure.

The spreadsheet is not the problem

The spreadsheet is a symptom. It reveals a deeper feature of the underlying system: humans are still the connective tissue between data and action.

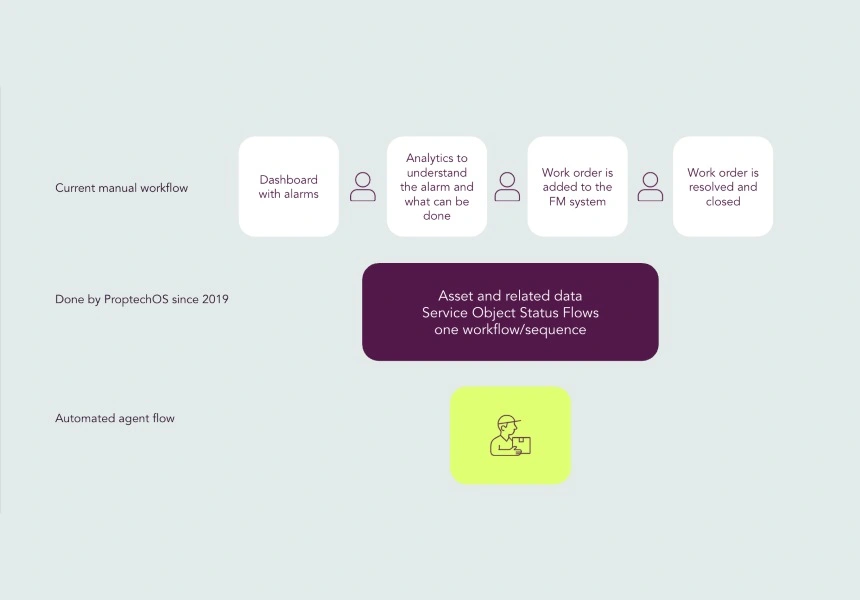

A facilities manager checks an energy report on Monday morning, identifies that HVAC performance degraded over the weekend, raises a work order by Tuesday, schedules an engineer for Thursday, and the issue is resolved by Friday. Five days of lag, manually coordinated across three systems, triggered by someone with enough context to know that a number on a spreadsheet meant something was wrong.

Multiply that across a portfolio of fifty or a hundred buildings and you have an operations model that is fundamentally human-bandwidth-constrained. The buildings generate more signal than the team can process. Issues that should be caught in hours are caught in days. Optimisation opportunities that should be acted on in real time are reviewed quarterly.

Building management systems were supposed to solve this — at least partially. A BMS is excellent at monitoring and control within a building. It is not designed to reason across systems, respond to context, or coordinate action without human instruction. You can see the fault. You cannot fix it without a person in the loop.

What AI agents actually do in a building

An AI agent is not an analytics tool and does not produce a report for a human to review. It observes conditions, reasons about them, decides on a course of action, and executes — autonomously, within defined parameters.

In a commercial building, that means an agent monitoring the HVAC system does not flag an anomaly and wait. It detects a developing fault, cross-references maintenance history and parts availability, determines whether a pre-emptive adjustment will defer the issue, makes the adjustment, and logs the decision for human review. The engineer sees an outcome, not a problem to diagnose.
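To make the observe, reason, act and log cycle concrete, here is a minimal Python sketch of the HVAC example. It is not ProptechOS code: the `FaultSignal` and `HvacAgent` names, the drift thresholds, the 180-day "recently serviced" window and the decision labels are all hypothetical assumptions chosen for illustration. Real fault logic would draw on far richer context than a single drift value.

```python
from dataclasses import dataclass, field


@dataclass
class FaultSignal:
    # Hypothetical observation: how far a unit has drifted from its setpoint.
    unit_id: str
    drift_c: float


@dataclass
class HvacAgent:
    # Context the agent cross-references; structure and values are hypothetical.
    days_since_service: dict
    parts_in_stock: dict
    drift_tolerance_c: float = 1.0    # drift treated as normal
    deferrable_drift_c: float = 3.0   # assumed upper bound for a pre-emptive fix
    recent_service_days: int = 180    # assumed "recently serviced" window
    log: list = field(default_factory=list)

    def handle(self, signal: FaultSignal) -> str:
        drift = abs(signal.drift_c)
        # Observe: drift within tolerance needs no action.
        if drift <= self.drift_tolerance_c:
            decision = "no_action"
        # Reason: a moderate drift on a recently serviced unit is assumed
        # to be deferrable with a pre-emptive setpoint adjustment.
        elif (drift <= self.deferrable_drift_c
              and self.days_since_service.get(signal.unit_id, float("inf"))
              < self.recent_service_days):
            decision = "preemptive_adjustment"
        # Otherwise raise a work order, noting whether parts are on hand.
        elif self.parts_in_stock.get(signal.unit_id, False):
            decision = "work_order_parts_ready"
        else:
            decision = "work_order_parts_needed"
        # Log every decision for human review. Executing it (writing to the
        # BMS or work-order system) is left to the caller in this sketch.
        self.log.append({"unit": signal.unit_id,
                         "drift_c": signal.drift_c,
                         "decision": decision})
        return decision
```

Each call both returns a decision and appends it to `agent.log`, which is the record an engineer would review afterwards.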

An energy agent does not report peak consumption after the fact. It monitors real-time load data, weather forecasts, and energy pricing, anticipates a demand spike two hours ahead, redistributes loads across systems to avoid peak tariffs, and documents exactly what it did and why.
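The load-redistribution step can be sketched as a greedy pass over an hourly forecast. This is a simplified illustration under stated assumptions: a fixed `peak_limit_kw` stands in for the peak tariff threshold, the forecast and the per-hour `deferrable_kw` (for example pre-cooling) are taken as given, and deferrable load is assumed movable to any hour. A real energy agent would also weigh weather, prices, comfort limits and the physical order in which load can be shifted.

```python
from typing import List, Tuple


def shift_peak_load(forecast_kw: List[float], deferrable_kw: List[float],
                    peak_limit_kw: float) -> Tuple[List[float], List[tuple]]:
    """Greedily move deferrable load out of hours forecast above peak_limit_kw.

    Returns the adjusted hourly plan and a list of (from_hour, to_hour, kw)
    moves, so the agent can document exactly what it did.
    """
    plan = list(forecast_kw)
    movable = list(deferrable_kw)
    moves = []
    for hour in range(len(plan)):
        excess = plan[hour] - peak_limit_kw
        while excess > 1e-9 and movable[hour] > 1e-9:
            # Send load to the currently least-loaded hour.
            target = min(range(len(plan)), key=lambda h: plan[h])
            headroom = peak_limit_kw - plan[target]
            if headroom <= 1e-9:
                break  # no hour can absorb more without creating a new peak
            kw = min(excess, movable[hour], headroom)
            plan[hour] -= kw
            plan[target] += kw
            movable[hour] -= kw
            excess -= kw
            moves.append((hour, target, kw))
    return plan, moves
```

For a forecast of `[70, 72, 90]` kW with 20 kW deferrable in the last hour and a 75 kW limit, the sketch moves 5 kW and 3 kW into the two quieter hours and then stops, leaving a residual 82 kW peak because no headroom remains. Recording that residual in `moves` and the plan, rather than silently creating a new peak elsewhere, is the kind of outcome an agent would log.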

A compliance agent does not generate a monthly ESG report from a data export. It continuously reconciles consumption, occupancy, and equipment data against reporting standards, flags deviations as they occur, and prepares structured records that are audit-ready at any point in time.
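One piece of continuous reconciliation, checking that a main meter agrees with the sum of its submeters and producing a structured record each period, might look like the sketch below. The record fields, the 5% tolerance and the energy-intensity calculation are illustrative assumptions, not requirements of any particular reporting standard, and occupancy and equipment data are left out for brevity.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass(frozen=True)
class ReconciliationRecord:
    # Hypothetical audit-ready record; field names are illustrative.
    period: str
    main_meter_kwh: float
    submeter_total_kwh: float
    gap_pct: float
    intensity_kwh_per_m2: float
    flagged: bool


def reconcile(period: str, main_meter_kwh: float, submeter_kwh: Sequence[float],
              floor_area_m2: float, tolerance_pct: float = 5.0) -> ReconciliationRecord:
    """Compare a main meter with the sum of its submeters and flag unexplained gaps."""
    if main_meter_kwh <= 0 or floor_area_m2 <= 0:
        raise ValueError("main meter reading and floor area must be positive")
    total = sum(submeter_kwh)
    gap_pct = 100.0 * abs(main_meter_kwh - total) / main_meter_kwh
    return ReconciliationRecord(
        period=period,
        main_meter_kwh=main_meter_kwh,
        submeter_total_kwh=total,
        gap_pct=round(gap_pct, 2),
        intensity_kwh_per_m2=round(main_meter_kwh / floor_area_m2, 3),
        flagged=gap_pct > tolerance_pct,  # deviation flagged as soon as it is seen
    )
```

Because each record is immutable and produced per period, the set of records is audit-ready at any point rather than assembled at month end.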

In operational terms, agentic proptech means the action layer becomes automated — not just the reporting layer.

Why this is possible now and not five years ago

Three things converged to make building-level AI agents viable.

Open data standards. Buildings have historically been siloed. BMS data lived in proprietary formats. Sensor data was trapped in hardware ecosystems. Reasoning across systems was effectively impossible because the systems could not talk to each other. The emergence of open ontologies, particularly the RealEstateCore standard, created a common data language for buildings. An HVAC reading, an occupancy signal, and an energy meter reading can now be understood by the same AI model because they are described in a shared schema.

Edge and cloud infrastructure. Agents need to read and write data in real time. The cloud and edge computing infrastructure required to support low-latency agent execution at building scale only became broadly available and affordable in the past few years. What previously required significant on-premise hardware investment is now achievable through standard cloud connectivity.

Large language model reasoning. The reasoning capability required to interpret ambiguous building signals — “is this temperature reading a sensor fault or a genuine thermal anomaly?” — has improved dramatically. Modern AI agents can operate with contextual judgement, not just rule-based triggers.

Together, these three shifts mean that the building operating system concept is no longer an architectural aspiration. It is deployable infrastructure.
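The shared-schema idea behind open data standards can be illustrated with a small mapping layer. In this sketch, vendor-specific point names from a BMS, an energy meter and an occupancy sensor are translated into one common record type. The point names, the `Observation` fields and the quantity labels are hypothetical and simplified; they are not actual RealEstateCore class or property identifiers.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Observation:
    # Simplified shared-schema record. Names are illustrative only,
    # not actual RealEstateCore identifiers.
    subject: str   # the space or asset the value describes
    quantity: str  # what is measured
    unit: str
    value: float


# Hypothetical vendor-specific point names mapped onto the shared vocabulary.
POINT_MAP = {
    "AHU1.ZN312.T": ("Room-3.12", "AirTemperature", "degC"),          # BMS point
    "MTR-07:kWh": ("Building-A", "ActiveEnergy", "kWh"),              # energy meter
    "pir/room-3.12/count": ("Room-3.12", "OccupancyCount", "people"), # sensor topic
}


def normalise(point_name: str, raw_value: float) -> Observation:
    """Translate one source-specific reading into the shared schema."""
    subject, quantity, unit = POINT_MAP[point_name]
    return Observation(subject, quantity, unit, float(raw_value))
```

Once normalised, a single query such as "everything known about Room-3.12" spans temperature and occupancy, regardless of which system produced each value.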

The four stages of operational maturity

Portfolios do not move from spreadsheets to autonomous operations in a single step. In practice, the transition follows a recognisable progression.

Stage 1: Manual monitoring. Data is collected — from BMS, meters, sensors — and displayed in dashboards or exported to spreadsheets. Humans review the data, identify issues, and initiate action. Response time is measured in days. Most commercial portfolios sit here today.

Stage 2: Automated alerting. Rule-based thresholds trigger notifications. A temperature exceeds 26°C, an alert fires. A consumption reading crosses a target, an email goes out. Response time compresses to hours but still requires human decision-making. Most building management system deployments operate at this stage.

Stage 3: Assisted decision-making. AI models analyse patterns and surface recommendations. An energy management platform suggests load-shifting strategies. A predictive maintenance tool flags components approaching failure. Humans still make the call, but they are better informed and faster. Response time moves to minutes for straightforward issues.

Stage 4: Autonomous operation. AI agents operate within defined permission boundaries without human initiation. They observe, reason, act, and log. Humans review outcomes, audit decisions, and adjust the permission framework. Response time is real time. Portfolios with a mature agentic proptech deployment operate here.
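Stage 2 logic is usually a set of static threshold rules, which is why it compresses response time without removing the human decision. A minimal sketch, using the 26°C example from the text and a hypothetical daily consumption target; the rule names and the 1800 kWh figure are assumptions:

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ThresholdRule:
    point: str
    limit: float
    message: str


# The 26 °C rule from the text plus a hypothetical consumption target.
RULES = [
    ThresholdRule("zone_temp_c", 26.0, "Zone temperature above 26 °C"),
    ThresholdRule("daily_kwh", 1800.0, "Daily consumption above target"),
]


def evaluate(readings: dict) -> list:
    """Return an alert message for every rule whose limit is exceeded.

    The rule only notifies; deciding what to do is still left to a person.
    """
    return [r.message for r in RULES
            if readings.get(r.point, float("-inf")) > r.limit]
```

Everything that distinguishes Stages 3 and 4 (context, reasoning, action) lives outside a rule like this.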

The jump from stage 3 to stage 4 is primarily a governance decision, not a technology one. The question is how to structure the permission boundaries, audit trails, and escalation logic that make autonomous action safe to deploy at portfolio scale.

The role of the digital twin

Autonomous agents require a live representation of the building they are operating in. An agent that adjusts HVAC setpoints needs to know the building layout, the equipment configuration, the occupancy schedule, and the historical performance baseline of every component it controls.

A digital twin serves that function: a live, structured model of the building that agents can read from and write to. Without it, agents operate without context. With it, they have a ground truth to reason against.

A digital twin is not a 3D visualisation tool, though it may include one. In the context of proptech software built for autonomous operations, it is a data model — continuously synchronised with physical building conditions — that represents spaces, equipment, relationships, and real-time state. Every agent decision is made in the context of that model and recorded against it.
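A minimal sketch of the twin as a data model rather than a visualisation: spaces, equipment, the relationships between them, live state, and a log of decisions recorded against the model. The class and field names are hypothetical, and a production twin would follow an ontology such as RealEstateCore rather than ad hoc dictionaries.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass
class Space:
    space_id: str
    parent: Optional[str] = None                # containing floor or building
    state: dict = field(default_factory=dict)   # latest synchronised readings


@dataclass
class Equipment:
    equipment_id: str
    kind: str                                   # e.g. "AHU", "Chiller"
    serves: list = field(default_factory=list)  # ids of spaces this unit serves
    state: dict = field(default_factory=dict)


@dataclass
class DigitalTwin:
    spaces: dict = field(default_factory=dict)
    equipment: dict = field(default_factory=dict)
    decisions: list = field(default_factory=list)

    def sync(self, entity_id: str, key: str, value: float) -> None:
        """Write a live reading from the physical building into the model."""
        entity = self.spaces[entity_id] if entity_id in self.spaces else self.equipment[entity_id]
        entity.state[key] = value

    def equipment_serving(self, space_id: str) -> list:
        """Follow 'serves' relationships from equipment to a space."""
        return [e for e in self.equipment.values() if space_id in e.serves]

    def record_decision(self, agent: str, entity_id: str, action: str) -> None:
        """Store an agent decision against the entity it affected."""
        self.decisions.append({
            "at": datetime.now(timezone.utc).isoformat(),
            "agent": agent,
            "entity": entity_id,
            "action": action,
        })
```

An agent asked to adjust conditions in a room can use `equipment_serving` to find which unit to act on, then read that unit's live `state` before deciding.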

The speed of digital twin deployment is often cited as a barrier. In practice, a structured onboarding process using an open ontology like RealEstateCore can move a building from raw data to a connected twin in 72 hours rather than the months-long timelines associated with legacy implementations.

What changes for the operations team

The transition to autonomous operations reorganises what the operations team does — it does not eliminate them.

Under manual monitoring, senior engineers spend significant time on routine interpretation and coordination — reviewing dashboards, triaging alerts, raising tickets, following up on work orders. These are high-effort, low-judgement activities that consume attention better spent on complex problems and strategic decisions.

Under autonomous operations, routine observation and action are handled by the agent layer. The operations team shifts toward agent supervision: reviewing decision logs, adjusting permission frameworks, identifying edge cases where agent logic needs refinement, and managing escalations. The work becomes higher-judgement and more strategic.

The core argument here is leverage, not headcount. A team managing a hundred-building portfolio cannot meaningfully monitor every building simultaneously. An agent team can. The human team’s role becomes oversight of the agent layer rather than direct management of every building.

The ProptechOS Agency model makes this operational: pre-built agents for energy management, fault detection, maintenance scheduling, and ESG reporting that can be deployed across a portfolio and supervised through a unified interface.

Energy management as the entry point

For most portfolios, energy is the logical starting point for autonomous operations — for three reasons.

The data is already there. Energy metering infrastructure is mandatory in most jurisdictions for ESG and regulatory compliance. The data exists. The question is what to do with it beyond reporting.

The economic case is immediate. Energy represents 25–40% of operating costs in a typical commercial building. An energy management solution that shifts load, avoids peak tariffs, and optimises equipment scheduling typically delivers measurable returns within months.

The risk profile is manageable. Energy optimisation agents can be deployed with conservative permission boundaries — recommending actions before executing them, requiring human approval above a defined threshold — and progressively granted more autonomy as the team builds confidence in the decision logic.

From energy, portfolios typically expand into operational efficiency use cases — predictive maintenance, automated work order generation, occupancy-driven ventilation control — where the data infrastructure built for energy management gets repurposed for broader facility operations.

Integration with existing systems

A consistent concern among CRE operations teams is integration complexity. Most large portfolios have heterogeneous BMS infrastructure — multiple vendors, different vintages, varying protocol support. Overlaying an AI agent layer on top of all of it sounds expensive and disruptive.

In practice, the integration architecture for modern building operating system platforms is designed to abstract away that complexity. Connection via BACnet, Modbus, MQTT, or cloud APIs means agents can ingest data from legacy systems without requiring those systems to be replaced. The BMS continues doing what it does. The agent layer sits above it, reading its outputs and — where permissions allow — writing back commands.
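The "agent layer above the BMS" arrangement is essentially an adapter pattern: each protocol gets an adapter behind a common read/write interface, and a gateway decides which points the agent may command. The sketch uses an in-memory stand-in instead of real BACnet, Modbus or MQTT clients, so the class names and point names are hypothetical; a real adapter would wrap an established protocol library.

```python
from typing import Dict, Protocol, Set


class PointSource(Protocol):
    """Common interface the agent layer sees, whatever protocol sits underneath."""
    def read(self, point: str) -> float: ...
    def write(self, point: str, value: float) -> None: ...


class InMemoryAdapter:
    """Stand-in for a BACnet, Modbus, MQTT or cloud API adapter.

    Values are kept in a dict so the sketch runs without hardware.
    """
    def __init__(self, protocol: str, values: Dict[str, float]):
        self.protocol = protocol
        self.values = dict(values)

    def read(self, point: str) -> float:
        return self.values[point]

    def write(self, point: str, value: float) -> None:
        self.values[point] = value


class AgentGateway:
    """Routes each point to its adapter and blocks writes the agent may not make."""
    def __init__(self, routes: Dict[str, PointSource], writable: Set[str]):
        self.routes = routes      # point name -> adapter
        self.writable = writable  # points the agent is permitted to command

    def read(self, point: str) -> float:
        return self.routes[point].read(point)

    def write(self, point: str, value: float) -> None:
        if point not in self.writable:
            raise PermissionError(f"agent may not write {point}")
        self.routes[point].write(point, value)
```

The existing systems stay untouched: swapping a legacy controller only means swapping its adapter, and the agent code above the gateway does not change.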

A BMS replacement project is expensive, high-risk, and multi-year. An agentic proptech deployment is additive, lower-risk, and faster to value. You are not replacing existing infrastructure — you are adding a reasoning and action layer on top of it.

Governance, permissions, and human-in-the-loop

Autonomous does not mean uncontrolled. The governance architecture of an agent deployment is as important as the technical architecture.

Every agent operating in a ProptechOS environment works within a defined permission boundary: what it can read, what it can write, what actions it can execute autonomously, what actions require human approval, and what conditions trigger escalation. These boundaries are set by the operations team and adjusted as confidence in the agent’s decision logic grows.
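A permission boundary of this kind can be expressed as a small policy object that every proposed action is checked against before execution. The field names, the two cost thresholds and the four outcomes (`execute`, `needs_approval`, `escalate`, `deny`) are assumptions for illustration, not the ProptechOS permission model.

```python
from dataclasses import dataclass, field


@dataclass
class PermissionBoundary:
    # Field names and thresholds are illustrative assumptions.
    readable: set = field(default_factory=set)            # points the agent may read
    writable: set = field(default_factory=set)            # points the agent may command
    autonomous_actions: set = field(default_factory=set)  # may run without approval
    approval_actions: set = field(default_factory=set)    # always need a human
    max_autonomous_cost: float = 0.0                      # above this, ask a human
    escalate_cost: float = float("inf")                   # above this, escalate

    def can_read(self, point: str) -> bool:
        return point in self.readable

    def check(self, action: str, target: str, est_cost: float) -> str:
        """Classify a proposed write action before the agent executes it."""
        if target not in self.writable:
            return "deny"
        if est_cost > self.escalate_cost:
            return "escalate"
        if action in self.autonomous_actions and est_cost <= self.max_autonomous_cost:
            return "execute"
        if action in self.autonomous_actions or action in self.approval_actions:
            return "needs_approval"
        return "deny"  # unknown actions are never executed
```

Granting more autonomy then means editing data, not code: raising `max_autonomous_cost` or moving an action from `approval_actions` to `autonomous_actions`.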

Every autonomous action is logged with full reasoning traceability: what signal triggered the action, what reasoning led to the decision, what the expected and actual outcome was. This audit trail is central to the governance model that makes autonomous operation acceptable to building owners, insurers, and regulators.
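An audit entry that captures the trigger, the reasoning, the expected outcome and, later, the actual outcome could be as simple as the record below, serialised one JSON object per line to an append-only log. The field names and the JSON Lines format are assumptions for the sketch.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass
class AuditEntry:
    # Field names are illustrative, mirroring the questions an auditor asks.
    agent: str
    trigger: str                          # what signal triggered the action
    reasoning: str                        # why the agent chose this action
    action: str
    expected_outcome: str
    actual_outcome: Optional[str] = None  # filled in once the result is known
    timestamp: str = ""

    def __post_init__(self):
        # Stamp the entry in UTC when it is created, unless one was supplied.
        if not self.timestamp:
            self.timestamp = datetime.now(timezone.utc).isoformat()


def to_log_line(entry: AuditEntry) -> str:
    """Serialise one entry as a JSON line for an append-only audit log."""
    return json.dumps(asdict(entry), sort_keys=True)
```

Writing the entry before acting and appending the actual outcome afterwards lets a reviewer compare what the agent expected with what happened.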

Human-in-the-loop is a design choice. Some decisions — major equipment interventions, tenant-facing changes, actions above a defined cost threshold — should always require human approval. The agent layer handles routine optimisation. Humans handle consequential judgement calls. The boundary between them is configurable.

From monitoring to acting: the practical starting point

The gap between where most CRE operations teams are today (reviewing spreadsheets, managing email alerts, manually coordinating work orders) and where autonomous operations become possible is smaller than it appears. The data is often already being collected. Integration with existing BMS infrastructure is straightforward. The infrastructure requirements are far lighter than legacy proptech implementations suggested.

The practical starting point is usually a single use case — energy peak management or fault detection — deployed on a subset of the portfolio with conservative permission boundaries. Measurable outcomes are visible within weeks. The permission framework expands. Use case coverage grows. The operations team gradually shifts its time from monitoring to supervision.

The transition from Excel to agents is an operational model change. The technology (proptech software with a real agent execution layer, a live digital twin, and open data standards) exists and is deployable today. The question is whether the operations model will keep up.